PostgreSQL Performance: From Slow Queries to Sub-Millisecond

The Problem

Our API p99 latency had crept up to 2.3 seconds. The culprit? Database queries that had grown with our data.

Step 1: Understanding the Query Planner

Before optimizing anything, we needed to understand what PostgreSQL was actually doing:

EXPLAIN (ANALYZE, BUFFERS, FORMAT TEXT)

SELECT * FROM orders

WHERE user_id = $1

AND status = 'active'

ORDER BY created_at DESC

LIMIT 20;The output revealed sequential scans on a 50M row table.

Step 2: Strategic Indexing

We created composite indexes that matched our query patterns:

CREATE INDEX CONCURRENTLY idx_orders_user_status_created

ON orders (user_id, status, created_at DESC);Key insight: column order in composite indexes matters. Put equality conditions first, range conditions last.

Step 3: Connection Pooling with PgBouncer

We switched from direct connections to PgBouncer in transaction mode. This alone reduced our connection overhead by 80%.

Step 4: Partitioning

For our largest tables, we implemented range partitioning by date:

CREATE TABLE orders (

id BIGSERIAL,

created_at TIMESTAMPTZ NOT NULL,

-- ...

) PARTITION BY RANGE (created_at);Results

- p99 latency: 2.3s → 12ms

- Average query time: 450ms → 0.8ms

- Database CPU: 85% → 15%

The lesson: most performance problems are solved with better indexing, not bigger hardware.

Related Projects

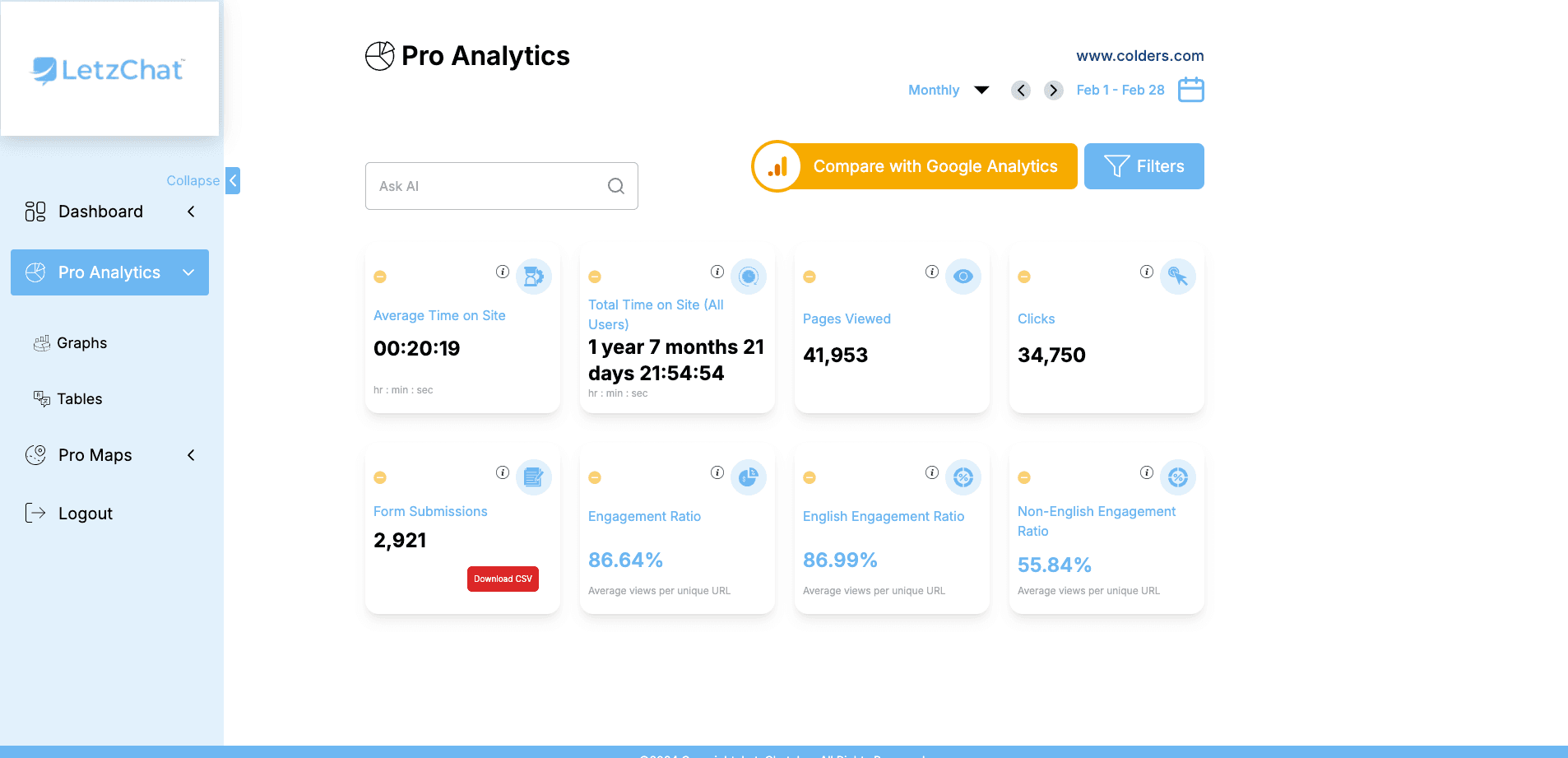

LetzChat – Enterprise Multilingual Translation & Communication Platform

Complete enterprise translation ecosystem — featuring real-time analytics (300M+ events/month), AI-powered chat, voice/video dubbing, live call translation, podcast/Zoom integration, glossary management, subtitle generation, and comprehensive analytics — breaking language barriers across all communication channels.

GenderRecognition.com: AI-Driven Gender Detection Solutions

State-of-the-art AI-powered gender detection platform processing images, videos, text, and voice data in real-time — built with privacy compliance, bias mitigation, and enterprise-level scalability. Includes comprehensive admin panel managing 2,800+ users and 33,000+ API calls.

GPTTranslator.co - AI-Powered Multilingual Translation Platform

AI-driven multilingual translation SaaS platform delivering high-accuracy translation for text, documents, web content, and advanced admin intelligence dashboards.

Related Articles

Point of Sale (POS) System MVP: Revolutionizing Retail Operations with Simplicity and Functionality

A case study on building a Java-based POS MVP for small and medium retailers, featuring sales processing, inventory management, reporting, and secure user authentication.

React Server Components in Production: What We Learned

After migrating a large React app to Server Components, here are the real-world trade-offs, performance wins, and pitfalls we encountered.