LESCO Bill Scraper - Automating Utility Bill Verification for Efficiency

The LESCO Bill Scraper is a specialized automation tool developed to streamline utility bill payment verification by extracting consumer numbers from bulk PDFs and checking payment statuses on the LESCO website.

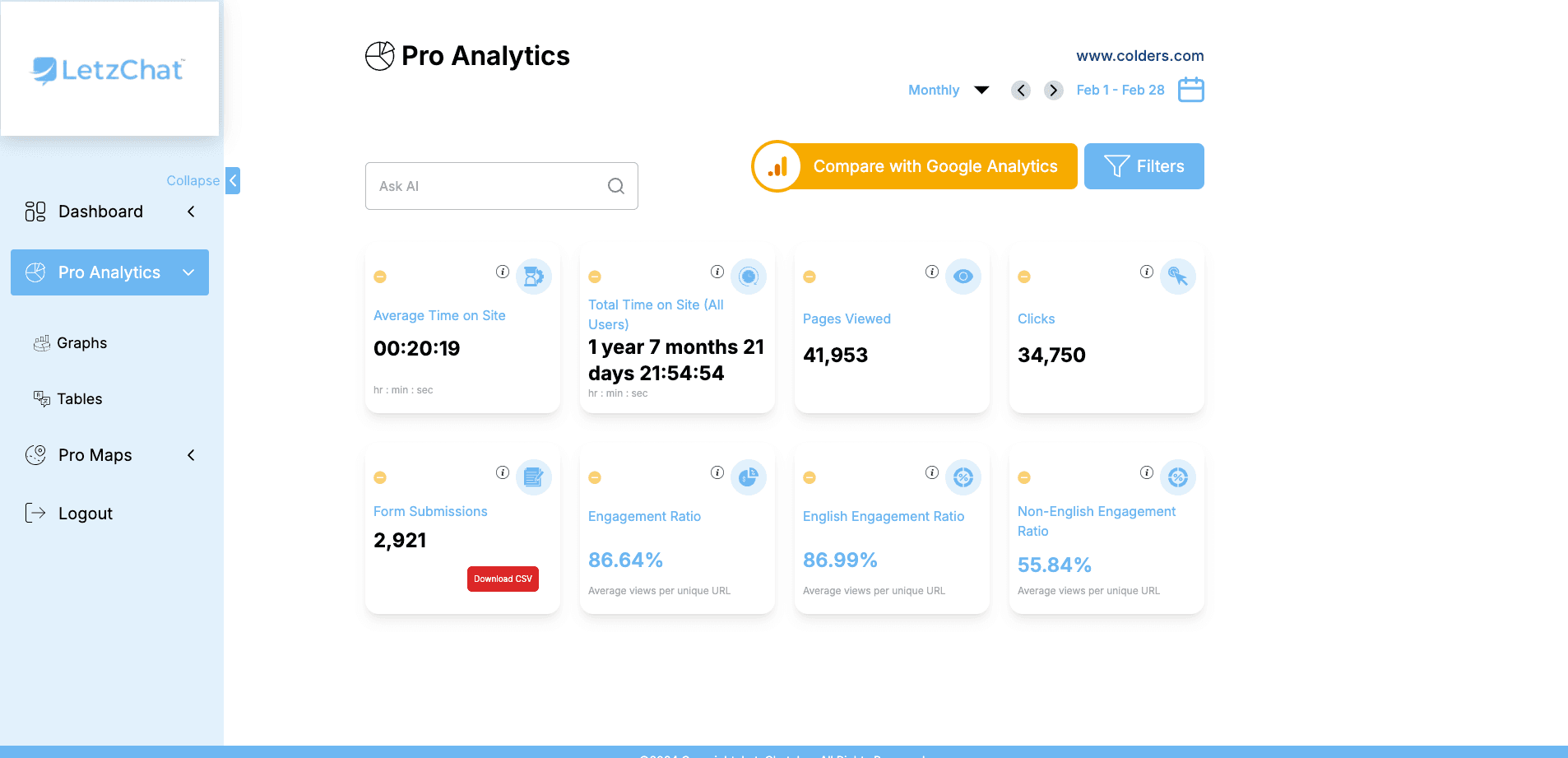


Overview

Title: LESCO Bill Scraper - Automating Utility Bill Verification for Efficiency

Industry: Utilities and Automation

Project Category: Web Application

Project Duration: 3 Weeks

Project Cost: $2000

Project Started On: July 2018

Role: Team Lead & Developer

Live URL: https://github.com/hamzaig/lesco-account-status-checking

Tags: • Python • Selenium • BeautifulSoup • PDF Parsing • Automation • Web Scraping • Operational Efficiency

Description: The LESCO Bill Scraper is a specialized automation tool developed to streamline the process of verifying utility bill payments for businesses and property management firms. By automating the extraction of consumer numbers from bulk PDF files and checking payment statuses on the LESCO website, this tool reduces manual effort, ensures accuracy, and enhances operational efficiency.

Problem: Businesses and property management firms face a significant challenge in manually verifying whether utility bills have been paid. The manual process is time-consuming, error-prone, and inefficient, especially when handling a large volume of accounts.

Solution: The LESCO Bill Scraper is a Python-based automation tool designed to address these challenges. Key functionalities include:

- PDF Parsing: Extracting consumer numbers and bill details from bulk PDF files using Python libraries.

- Web Scraping: Automating navigation of the LESCO website with Selenium to enter consumer numbers and retrieve payment statuses.

- Data Management: Efficiently handling extracted data, logging payment statuses, and generating structured reports for analysis.
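The PDF-parsing step can be sketched as follows. This is a minimal illustration, not the project's actual code: it assumes the `pypdf` package for text extraction and a hypothetical 14-digit reference-number layout (`REFERENCE_PATTERN`). Real bills may print consumer numbers differently, so the pattern should be checked against a sample bill before use.

```python
import re
from typing import Iterable, List

# Hypothetical pattern: a 14-digit number, optionally printed in groups of
# 2, 5 and 7 digits separated by single spaces (e.g. "12 34567 1234567").
# Adjust it to the actual layout of the bills being processed.
REFERENCE_PATTERN = re.compile(r"\b(\d{2})\s?(\d{5})\s?(\d{7})\b")


def pdf_pages_text(path: str) -> List[str]:
    """Return the extracted text of each page (requires the pypdf package)."""
    from pypdf import PdfReader  # imported lazily so the parser below has no dependency

    reader = PdfReader(path)
    return [page.extract_text() or "" for page in reader.pages]


def extract_consumer_numbers(pages: Iterable[str]) -> List[str]:
    """Collect unique numbers matching REFERENCE_PATTERN, in first-seen order."""
    seen = set()
    numbers = []
    for text in pages:
        for match in REFERENCE_PATTERN.finditer(text):
            number = "".join(match.groups())  # drop the separating spaces
            if number not in seen:
                seen.add(number)
                numbers.append(number)
    return numbers
```

Usage would be `extract_consumer_numbers(pdf_pages_text("bills.pdf"))`, where `bills.pdf` is a placeholder file name. Keeping extraction and matching separate makes the regex easy to test on plain strings, and deduplication prevents the same account from being checked twice when a bulk PDF repeats a bill.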

Technologies Used:

- Python: For core development and library integration.

- Selenium: For automating web navigation and interactions.

- BeautifulSoup: For parsing and extracting HTML content from web pages.

- Amazon EC2: For deployment and hosting.

- Node.js & Puppeteer: Supporting technologies for additional backend automation tasks.
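The Selenium lookup for a single consumer number might look like the sketch below. It assumes a page with one input field, one submit button, and one result element; the URL and CSS selectors are placeholders (the LESCO page's markup is not described here), and `lookup_status` is a hypothetical helper rather than code from the repository. The locator strategy is passed as the string `"css selector"`, which is the value of Selenium's `By.CSS_SELECTOR`, so the function itself needs no Selenium import.

```python
import time
from typing import Optional


def lookup_status(driver, url: str, consumer_number: str,
                  input_locator: str, submit_locator: str,
                  result_locator: str, timeout: float = 10.0,
                  poll_interval: float = 0.5) -> Optional[str]:
    """Submit one consumer number and return the text of the result element.

    `driver` is a Selenium WebDriver; all locators are CSS selectors whose
    values must be taken from the real page. Returns None if no non-empty
    result appears within `timeout` seconds.
    """
    driver.get(url)
    field = driver.find_element("css selector", input_locator)
    field.clear()
    field.send_keys(consumer_number)
    driver.find_element("css selector", submit_locator).click()

    deadline = time.monotonic() + timeout
    while True:
        # find_elements returns an empty list instead of raising when nothing matches
        results = driver.find_elements("css selector", result_locator)
        if results and results[0].text.strip():
            return results[0].text.strip()
        if time.monotonic() >= deadline:
            return None
        time.sleep(poll_interval)
```

In a batch run, one driver (for example `webdriver.Chrome()`) would be reused for every consumer number, ideally with a short pause between requests. If the result is rendered as a table, `BeautifulSoup(driver.page_source, "html.parser")` could parse it instead of reading a single element's text.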

Impact:

- Operational Efficiency: Automated the utility bill verification process, reducing hours of manual work to just a few minutes.

- Accuracy: Minimized human error, ensuring reliable and timely verification.

- Resource Optimization: Freed up employee time for strategic and value-added activities.

- Cost Savings: Helped businesses avoid late payment penalties by ensuring timely verification.

The LESCO Bill Scraper demonstrates the power of automation in solving routine operational challenges, paving the way for broader digital transformation initiatives.

GitHub Repository: LESCO Bill Scraper (https://github.com/hamzaig/lesco-account-status-checking)

Key Highlights

- Automated utility bill verification workflow from PDF extraction to payment-status checks

- Bulk PDF parsing to extract consumer numbers and bill details with high reliability

- Selenium + BeautifulSoup pipeline for end-to-end web automation and status retrieval

- Structured report generation for payment tracking and operational decision support

- Reduced hours of manual verification effort to minutes

- Improved accuracy, reduced the risk of late-payment penalties, and optimized team productivity
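The structured-report step could be sketched as a CSV writer that takes (consumer number, raw status text) pairs and also returns a count per status. The keywords `PAID`, `UNPAID`, and `NOT PAID` are assumptions about the wording on the results page, and `classify_status` / `write_report` are illustrative names, not functions from the repository.

```python
import csv
import io
from collections import Counter
from typing import Dict, Iterable, Optional, TextIO, Tuple


def classify_status(status_text: Optional[str]) -> str:
    """Map raw result text to PAID / UNPAID / UNKNOWN (keywords are assumptions)."""
    if not status_text:
        return "UNKNOWN"
    text = status_text.upper()
    # Check the negative forms first, because "UNPAID" also contains "PAID".
    if "UNPAID" in text or "NOT PAID" in text:
        return "UNPAID"
    if "PAID" in text:
        return "PAID"
    return "UNKNOWN"


def write_report(results: Iterable[Tuple[str, Optional[str]]],
                 out: TextIO) -> Dict[str, int]:
    """Write one CSV row per consumer number and return a count per status."""
    writer = csv.writer(out)
    writer.writerow(["consumer_number", "status", "raw_text"])
    counts: Counter = Counter()
    for number, raw in results:
        status = classify_status(raw)
        counts[status] += 1
        writer.writerow([number, status, raw or ""])
    return dict(counts)


if __name__ == "__main__":
    buffer = io.StringIO()
    summary = write_report(
        [("12345671234567", "Bill Status: PAID"), ("98765432109876", "Unpaid")],
        buffer,
    )
    print(buffer.getvalue())
    print(summary)
```

Keeping the raw page text next to the derived status lets an operator audit any row marked `UNKNOWN` instead of trusting the keyword match blindly.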

