VoiceDubbing.ai: AI-Powered Voice Dubbing for Seamless Multilingual Audio

Introduction

Content distribution is global, but language barriers still limit reach, engagement, and monetization. For creators, studios, educators, and enterprise training teams alike, traditional dubbing workflows are expensive, slow, and difficult to scale.

VoiceDubbing.ai was built to solve this problem through AI-powered multilingual dubbing with expressive voice output, precise timing, and cloud-scale processing.

This project combines modern speech AI, workflow automation, and production-ready infrastructure to make high-quality localization faster and more accessible.

Project Overview

VoiceDubbing.ai is a next-generation dubbing platform designed for seamless multilingual audio generation across video and audio content.

Core goals:

- Reduce dubbing turnaround from weeks to minutes

- Preserve emotion, tone, and speaker intent

- Support multilingual and accent-aware localization

- Deliver studio-grade output quality

- Enable automation through APIs for enterprise workflows

I contributed as a core developer, focusing on backend architecture, AI integration, and scalable cloud processing.

The Problem with Traditional Dubbing

Legacy dubbing pipelines create recurring operational constraints:

- High production costs from voice talent, studio sessions, and post-production

- Slow execution due to manual recording and synchronization

- Inconsistent emotional quality in low-end synthetic outputs

- Difficulty scaling across many languages and regional variations

For organizations localizing large content libraries, these constraints directly affect speed-to-market and content ROI.

The Solution: VoiceDubbing.ai

VoiceDubbing.ai automates end-to-end dubbing using advanced speech synthesis and intelligent workflow orchestration.

The platform provides:

- AI-driven expressive voice generation

- Multilingual and dialect-aware dubbing

- Automatic alignment with video timing and lip movement

- Script-to-voice workflows through text-to-speech

- Custom voice models and voice cloning support

- Cloud-native bulk processing for creators and enterprises

Key Features

1. AI-Driven Voice Dubbing

The platform uses modern speech synthesis models to produce natural-sounding voiceovers that retain emotional context and speaker intent.

2. Multilingual and Accent Support

VoiceDubbing.ai supports a wide range of languages, including major global languages and regional accents, for authentic localization.

3. Emotionally Expressive AI Voices

Generated voices can express emotional states such as excitement, seriousness, urgency, or calm delivery, depending on script context and target audience.

4. Seamless Video Integration and Lip-Sync

Dubbed output is synchronized to source timing to maintain natural pacing and accurate audiovisual alignment for media-grade delivery.
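One way to picture the timing step is as a duration-fitting calculation: each dubbed line has to fit the slot the original line occupied. The sketch below is a minimal illustration under stated assumptions, not the platform's actual alignment method. The `Segment` type, the function names, and the 0.8 to 1.25 clamp range are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """One line of dialogue, with all times in seconds (hypothetical type)."""
    source_start: float
    source_end: float
    dubbed_duration: float  # length of the synthesized audio before fitting


def tempo_factor(segment: Segment, min_factor: float = 0.8, max_factor: float = 1.25) -> float:
    """Return the playback-speed multiplier that fits the dub into the source slot.

    A factor above 1.0 speeds the dubbed audio up; below 1.0 slows it down.
    The result is clamped so speech is never stretched beyond the given bounds.
    """
    slot = segment.source_end - segment.source_start
    if slot <= 0 or segment.dubbed_duration <= 0:
        raise ValueError("segment durations must be positive")
    raw = segment.dubbed_duration / slot
    return max(min_factor, min(max_factor, raw))


def fitted_duration(segment: Segment) -> float:
    """Duration of the dubbed audio after applying the clamped tempo factor."""
    return segment.dubbed_duration / tempo_factor(segment)
```

With these assumed bounds, a 2.4-second dub in a 2-second slot gets a factor of 1.2 and fits exactly. A 4-second dub in the same slot is clamped to 1.25 and still overruns by 1.2 seconds, which signals that the translated line itself would need shortening rather than more time-stretching.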

5. Text-to-Speech Conversion

Users can convert scripts directly into high-quality voiceovers, enabling fast narration production without manual recording.

6. Custom Voice Models and Voice Cloning

Organizations can build voice identities for branded content and personalized experiences, including controlled voice cloning scenarios.

7. Cloud-Based Scalability

Processing and storage run on scalable cloud infrastructure, making high-volume dubbing workflows fast, reliable, and operationally efficient.

8. Studio-Grade Output Quality

The platform supports clean audio output with enhancement controls and optional background elements for professional final delivery.

Technical Implementation

Backend: FastAPI

The backend is built on FastAPI for high-throughput request handling and low-latency orchestration of speech and dubbing pipelines.

Key responsibilities:

- Job orchestration and queue handling

- Model inference workflow coordination

- Asset lifecycle management

- API-first integration for external systems
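The job orchestration and queue handling responsibility can be sketched with the standard library alone. The example below is an assumption-laden illustration, not the project's code: the stage names, `DubbingJob`, and `process` are hypothetical, and each stage is a placeholder where a real pipeline would call a model. In a FastAPI service, a route handler would typically enqueue the job and return its ID while workers like these run in the background.

```python
import asyncio
from dataclasses import dataclass, field
from enum import Enum


class JobStatus(str, Enum):
    QUEUED = "queued"
    RUNNING = "running"
    DONE = "done"
    FAILED = "failed"


@dataclass
class DubbingJob:
    job_id: str
    target_language: str
    status: JobStatus = JobStatus.QUEUED
    stages_completed: list = field(default_factory=list)


# Hypothetical stage names; the article does not list the real pipeline stages.
STAGES = ("transcribe", "translate", "synthesize", "align")


async def run_stage(job: DubbingJob, stage: str) -> None:
    """Placeholder for a model call; a real stage would invoke inference here."""
    await asyncio.sleep(0)  # yield control, as an awaited inference call would
    job.stages_completed.append(stage)


async def worker(queue: asyncio.Queue) -> None:
    """Pull jobs off the queue and run every stage in order."""
    while True:
        job = await queue.get()
        job.status = JobStatus.RUNNING
        try:
            for stage in STAGES:
                await run_stage(job, stage)
            job.status = JobStatus.DONE
        except Exception:
            job.status = JobStatus.FAILED
        finally:
            queue.task_done()


async def process(jobs: list, worker_count: int = 2) -> list:
    """Enqueue jobs, drain the queue with a fixed worker pool, then stop the workers."""
    queue: asyncio.Queue = asyncio.Queue()
    workers = [asyncio.create_task(worker(queue)) for _ in range(worker_count)]
    for job in jobs:
        queue.put_nowait(job)
    await queue.join()  # returns once every job has been marked task_done
    for task in workers:
        task.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return jobs
```

A fixed worker pool keeps the number of concurrent inference calls bounded, so a burst of submissions lengthens the queue instead of overloading the models.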

Frontend: Next.js

A modern Next.js interface provides:

- Project upload and management flows

- Script and language configuration

- Preview and refinement controls

- Responsive cross-device usability

AI and Machine Learning

The system combines text-to-speech, neural voice cloning, and context-aware model behavior for realistic dubbing output.

Implemented capabilities:

- Emotion-aware synthesis

- Tone and style conditioning

- Dataset-driven tuning for domain-specific use cases
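Tone and style conditioning can be illustrated with SSML, the W3C markup many speech engines accept. This is a swapped-in technique for illustration only: the article does not say whether VoiceDubbing.ai uses SSML. The preset names and values below are hypothetical. Standard SSML has no emotion element, so the sketch approximates style through the `prosody` element's `rate` and `pitch` attributes. A fully conforming document would also declare the SSML namespace on `speak`.

```python
import xml.etree.ElementTree as ET

# Hypothetical presets: this mapping from style names to prosody values is
# illustrative and is not taken from VoiceDubbing.ai.
STYLE_PRESETS = {
    "calm": {"rate": "slow", "pitch": "low"},
    "urgent": {"rate": "fast", "pitch": "high"},
    "neutral": {"rate": "medium", "pitch": "medium"},
}


def to_ssml(text: str, style: str = "neutral", lang: str = "en-US") -> str:
    """Wrap text in a minimal SSML <speak><prosody> envelope for the given style."""
    if style not in STYLE_PRESETS:
        raise ValueError(f"unknown style: {style}")
    speak = ET.Element("speak", {"version": "1.1", "xml:lang": lang})
    prosody = ET.SubElement(speak, "prosody", STYLE_PRESETS[style])
    prosody.text = text  # ElementTree escapes &, <, and > on serialization
    return ET.tostring(speak, encoding="unicode")
```

Calling `to_ssml("Doors close in one minute.", style="urgent")` produces a `speak` document whose single `prosody` element carries `rate="fast"` and `pitch="high"`.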

API and Workflow Automation

Developer-friendly APIs let teams embed VoiceDubbing.ai in media pipelines, content management systems, learning platforms, and enterprise localization stacks.
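An API-first integration usually comes down to submitting a job over HTTP. The sketch below only builds the request and does not send it. The endpoint URL, the JSON field names, and the bearer-token scheme are all assumptions made for illustration, since the article does not document the real API.

```python
import json
import urllib.request

# Hypothetical endpoint and field names, for illustration only.
API_URL = "https://api.example.com/v1/dubbing-jobs"


def build_job_request(api_key: str, media_url: str, target_languages: list) -> urllib.request.Request:
    """Build (but do not send) a POST request that would create a dubbing job."""
    if not target_languages:
        raise ValueError("at least one target language is required")
    payload = {"media_url": media_url, "target_languages": target_languages}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

A caller would pass the returned object to `urllib.request.urlopen` to submit it. Keeping construction separate from sending makes the payload easy to inspect and test inside a CMS or pipeline integration.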

Cloud Infrastructure and Security

Cloud deployment supports:

- Elastic scaling for burst workloads

- High-availability processing pipelines

- Secure asset handling and access control

- Privacy-aware data processing for sensitive media
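Elastic scaling for burst workloads can be reduced to a simple sizing rule: provision enough workers to clear the current backlog within one scaling interval, within fixed bounds. The function below is a minimal sketch of that rule. Its name, parameters, and default limits are hypothetical, and the article does not describe the platform's actual autoscaling policy.

```python
import math


def desired_workers(
    queued_media_minutes: float,
    minutes_per_worker_per_interval: float,
    min_workers: int = 1,
    max_workers: int = 50,
) -> int:
    """Return the worker count needed to clear the backlog in one interval.

    queued_media_minutes: total minutes of media waiting to be dubbed.
    minutes_per_worker_per_interval: media minutes one worker can process
        during a single scaling interval (an assumed, measured throughput).
    """
    if minutes_per_worker_per_interval <= 0:
        raise ValueError("per-worker throughput must be positive")
    if queued_media_minutes < 0:
        raise ValueError("backlog cannot be negative")
    needed = math.ceil(queued_media_minutes / minutes_per_worker_per_interval)
    # Clamp so the pool never drops to zero or grows without limit.
    return max(min_workers, min(max_workers, needed))
```

With a throughput of 30 media minutes per worker per interval, a 95-minute backlog calls for 4 workers, an empty queue stays at the 1-worker floor, and a very large backlog is capped at the 50-worker ceiling.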

Product Experience and UX

VoiceDubbing.ai was designed for fast onboarding and low-friction execution:

- Clear landing page communicating value and workflows

- Intuitive dashboard for job control and output previews

- Quick controls for voice, speed, language, and style adjustments

- Simple export flow for production-ready media assets

Who Benefits

Content Creators

- Localize YouTube videos, podcasts, and tutorials quickly

- Expand global reach without traditional dubbing overhead

Media and Entertainment Teams

- Dub films, series, and documentaries efficiently

- Maintain timing and emotional continuity in target languages

E-Learning Platforms

- Build multilingual course narration and interactive content

- Improve accessibility for international learners

Corporate Training and Internal Communications

- Localize training assets and webinars for global teams

- Maintain brand-consistent voice experiences

Business Value and Differentiation

VoiceDubbing.ai delivers three major advantages:

| Value Area | Outcome |
|---|---|
| Speed | Automation reduces dubbing turnaround dramatically |
| Cost Efficiency | Lower dependency on studio-heavy manual workflows |
| Scalability | Supports both creator-scale and enterprise-scale localization |

Additional differentiators:

- Strong synchronization accuracy

- Expressive, non-robotic voice quality

- API-first extensibility for product integration

Challenges Solved During Development

- Balancing inference quality with processing speed

- Maintaining synchronization across variable speech patterns

- Preserving emotion across language transformations

- Building predictable performance under high concurrent load

These challenges were addressed through iterative model tuning, optimized pipeline orchestration, and cloud-aware scaling strategies.

Future Roadmap

Planned enhancements include:

- Real-time dubbing for live streams and virtual events

- Advanced voice modulation (pitch, tempo, and speaking-style controls)

- Hybrid AI + human voice marketplace workflows

- Deeper context intelligence for domain-specific dubbing quality

Conclusion

VoiceDubbing.ai demonstrates how AI can fundamentally improve multilingual media production by making dubbing faster, more expressive, and more scalable.

By combining FastAPI backend performance, Next.js usability, neural voice technologies, and cloud-native architecture, the platform enables creators, educators, and enterprises to localize content for global audiences with higher efficiency and quality.

Related Projects

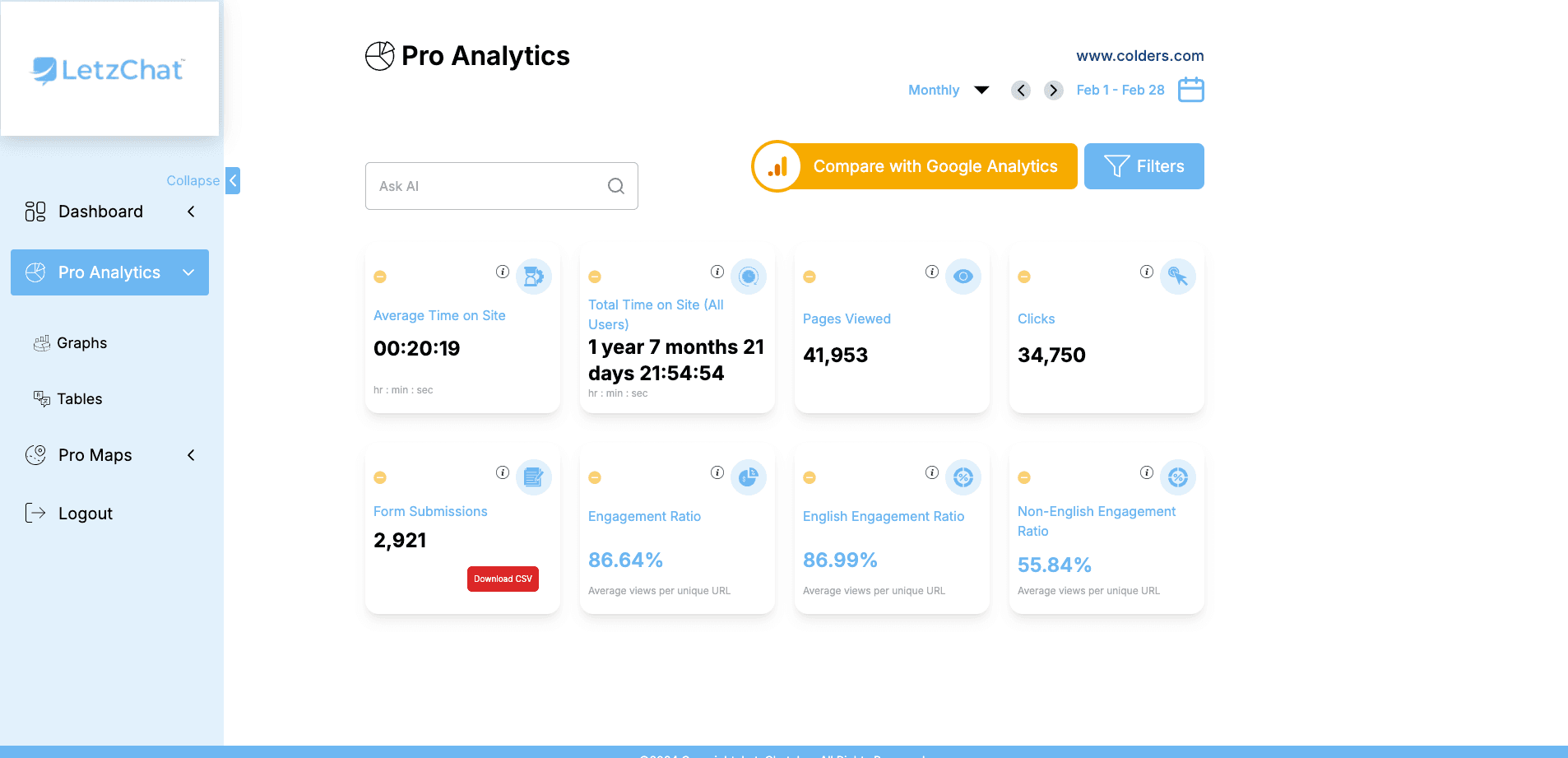

LetzChat – Enterprise Multilingual Translation & Communication Platform

Complete enterprise translation ecosystem — featuring real-time analytics (300M+ events/month), AI-powered chat, voice/video dubbing, live call translation, podcast/Zoom integration, glossary management, subtitle generation, and comprehensive analytics — breaking language barriers across all communication channels.

VoiceDubbing.ai – AI-Powered Voice Dubbing for Seamless Multilingual Audio

AI-powered dubbing SaaS platform that delivers multilingual, emotionally expressive voiceovers with automation, lip-sync precision, and cloud-scale processing.

Levate.ai - AI-Driven Hotel Revenue Optimization Platform

Advanced AI-powered hospitality revenue platform built to maximize hotel profitability through dynamic pricing, smart upselling, and real-time market intelligence.

Related Articles

GenderRecognition.com: AI-Driven Gender Detection for Smarter Insights

Building a state-of-the-art AI platform that provides accurate, scalable, and privacy-compliant gender recognition solutions across multiple industries using deep learning, computer vision, and multi-modal AI.

Levate.ai: AI-Driven Hotel Revenue Optimization Platform

A case study on Levate.ai, a FastAPI and Next.js platform that uses AI-powered dynamic pricing and intelligent upselling to help hotels increase revenue and improve guest experience.

Video Dubbing and Voice Cloning System: AI-Powered Content Localization

A case study on building an AI-powered video dubbing and voice cloning platform that translates content across languages while preserving speaker identity, emotion, and lip-sync quality.